Even though SQL Server capacity planning demands wide knowledge about data platform architecture, performance counters, external dependencies, SQL Server versions and editions, migration paths, hardware, virtualization, non-functional requirements, licensing etc. it is still a very mathematically oriented problem by its nature, especially on bigger environments consisting of 10 servers up to hundreds of servers or more. For these math problems we need a proper software.

Understanding behavioral patterns and trends for performance counters

One of the key points in estimating the capacity needs for the new data platform environment is to understand the trend for each performance counter, such as SQL Server instance RAM and CPU usage, database IOPS, log and data file sizes. This will make the predictions match more accurately the future computing power needs. You also should be able to extrapolate these time series against the best fitting trend and then compare them in a seasonal manner, whether it is a question of databases or SQL Server instances. Extrapolation is a mathematical term for modifying existing data set based on a given trend.

How different hardware affects the performance data

For performance counters, such as CPU usage, it is critical to understand what happens if we are going to change the underlying hardware or virtual machine configuration, or if we are migrating from on-premise into public cloud. It is easy to understand that there may be great differences between the source and target platform hardware performance. Therefore, we must be able to calculate things like CPU performance ratio between the current source server setup and hypothetical target server setup, in order to be able to extrapolate the instance or database level performance counter time series into a new norm. As you can see, the equation gets more complicated.

Controlling your SLA’s

One thing you should also be able to forecast is the service level for each of your SQL Server instance or database, from different aspects of the performance counters. The question is: what is the optimal capacity of the new data platform with desired level of SLA’s? This means you must be able to not only constraint your new data platform environment with some threshold values, such as “peaking CPU must not exceed the 80% limit on instance level on this server”, but also be able to constraint these threshold values by different kinds of SLA’s, for example: “peaking CPU must not exceed the 80% limit on instance level on this server 99.9% of the time”. This leaves you a possibility to calculate different service levels for different servers, instances and databases in your target platform.

Optimizing your SQL Server capacity usage

As we can see, even the basic migration scenarios need quite a lot of math. But what if, in addition to just finding out the actual capacity needs for a basic SQL Server migration scenario, you want to reach some savings by consolidating the target servers? This is the phase where machine learning kicks in. The question to be asked is: “what is the minimum number of servers and CPU cores needed in the target platform for the optimal stacking of the SQL Server instances and databases, without sacrificing performance and availability?” Machine learning is really the key to solve this equation in the most efficient way, because it is practically impossible to make such calculations manually due to the amount of possible logical combinations in the calculation logic.

SQL Governor software will make capacity planning more accurate – and fun!

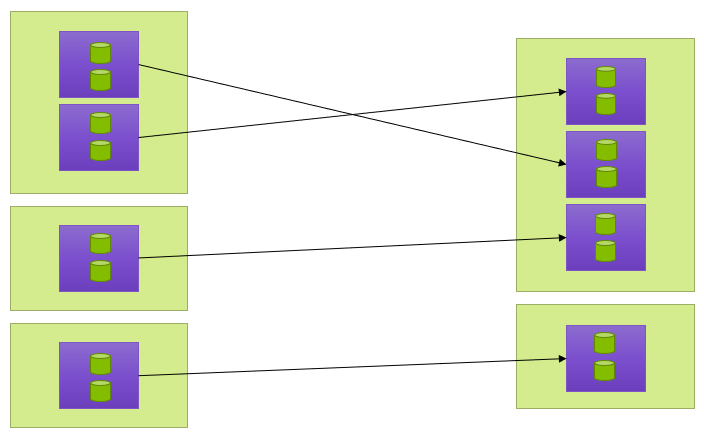

The SQL Governor software has a unique capacity optimization ability that is based on an international patented machine learning methodology. It analyses the workload data of the SQL Server instances, and based on this data automatically calculates the optimal configuration for the new platform by restacking the SQL Server instances in the most compact way. The optimization aims to generate considerable cost savings by minimizing the required server resources over the entire lifecycle of the system.

This picture shows the restacking of the instances from the source platform to the target platform. The light green boxes are the servers and the purple boxes are the instances.

How SQL Governor works (warning: the geeky stuff is here!)

So how was SQL Governor invented? One day when I was thinking about SQL Server instance workloads, I got an insight into performance counters, such as CPU usage. Even though the total workload of SQL Server instances may be exactly the same between two servers, the amount of maximum capacity need depends totally on the individual time series of the SQL Server instances over time. This same ideology works on database level as well. This means that the same total capacity usage may still lead into very different maximum capacity needs at the server level.

My second finding was that the great majority of all SQL Server instances are very behavioral over time. This gives a great opportunity for time series optimization. The key is to find which performance counter time series have the best fit, in order to stack their corresponding SQL Server instances under the same server into a consolidated server schema. This is possible, because the instances are not competing the same hardware or virtualization resources at the same time, and therefore it minimizes the sleeping CPU cycles over time. In Governor, this optimization work is effectively done by machine learning.

Got interested? Read more about SQL Governor and how to save up to 50% in your data platform costs.

Jani K. Savolainen

Founder & CTO

DB Pro Oy